Origin M1 Half-Body Demonstrates Real-Time Head-Eye Coordination

Robot Details

Origin M1 • AheadForm

Published

March 23, 2026

Reading Time

3 min read

Author

Origin Of Bots Editorial Team

Coordination Milestone Achieved

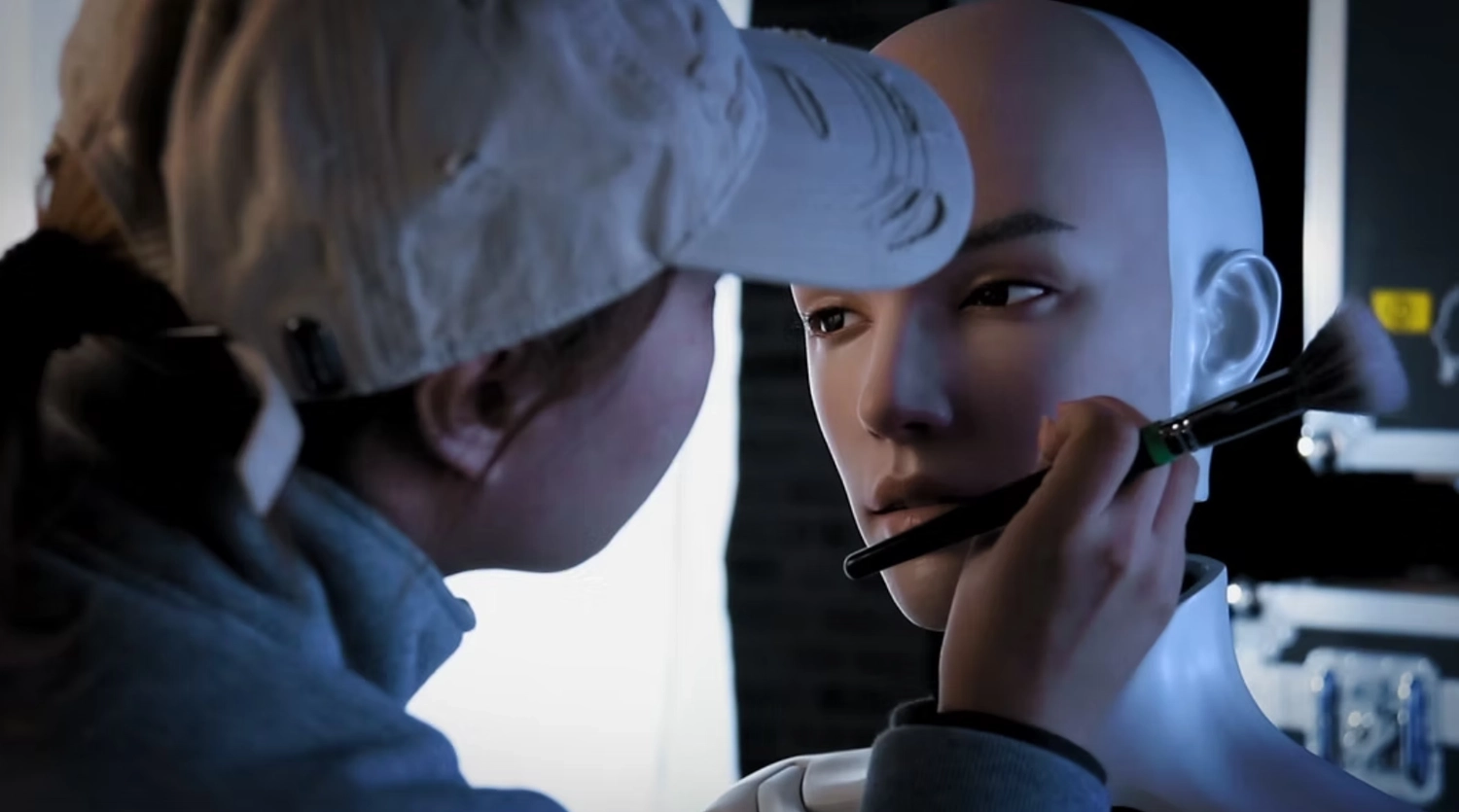

AheadForm's Origin M1 half-body robot drew attention at a Shanghai demonstration in early 2026, showing real-time head-eye coordination that tracks moving objects. The stationary upper-torso platform, which evolved from earlier head-only prototypes, synchronizes gaze shifts with subtle neck tilts to mimic human attentiveness. Researchers described the result as an important step for AI embodiment: the robot can hold a natural conversation while following visual cues, a capability tested over multiple interaction trials. The demonstration stands apart from much of traditional robotics by prioritizing emotional reciprocity over locomotion.

Expressive Motion Redefined

The Origin M1 uses brushless micro-motors to drive lifelike facial nuances, blending lip synchronization with gaze tracking so that conversations feel more human. Cameras embedded in the eyes capture the surroundings and feed AI models that adjust expressions on the fly, whether nodding in agreement or widening the eyes in surprise. The half-body design focuses on upper-torso fluidity, which lets researchers deploy it in controlled settings where full mobility would distract from facial fidelity. Recent trials showed that it can sustain eye contact during extended dialogues, which helps build trust in human-robot exchanges in a way static avatars cannot.
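To illustrate how an eye camera's view of an object could become a gaze command, here is a minimal sketch that converts a pixel position into yaw and pitch angles under a standard pinhole camera model. The function name `pixel_to_gaze_angles` and the intrinsics (`fx`, `fy`, `cx`, `cy`) are illustrative assumptions; AheadForm has not published how the Origin M1 performs this step.

```python
import math


def pixel_to_gaze_angles(u, v, fx, fy, cx, cy):
    """Convert a pixel (u, v) into yaw and pitch angles in radians.

    Assumes an ideal pinhole camera with focal lengths fx, fy (in pixels)
    and principal point (cx, cy). These values are hypothetical and do not
    come from the Origin M1 spec sheet.
    """
    # Positive yaw means the target is to the right of the optical axis.
    yaw = math.atan2(u - cx, fx)
    # Image rows grow downward, so negate to make "up" a positive pitch.
    pitch = -math.atan2(v - cy, fy)
    return yaw, pitch


# Hypothetical 640x480 camera with a 600-pixel focal length.
yaw, pitch = pixel_to_gaze_angles(u=920, v=240, fx=600, fy=600, cx=320, cy=240)
print(math.degrees(yaw), math.degrees(pitch))
```

A target at the image center yields zero for both angles, so the eyes stay put; the further the target drifts from center, the larger the commanded rotation.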

Perception Tech Breakthrough

Visual SLAM powers the Origin M1's gaze system, coordinating head and eye movements without relying on legs or wheels, an engineering advance built on IMU and gyroscope fusion for stable tracking. Microphones capture voice tones and sync them with visual focus to interpret intent, while proprietary software provides ROS2 compatibility for custom AI integrations. In demos this setup achieves sub-second response times and, unlike earlier models, can glance predictively at hand gestures. AheadForm's innovation lies in minimalist mechanics that amplify perceptual depth, setting a new benchmark for embodied intelligence in a compact form.
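One common way to coordinate head and eye movement is to let the eyes cover a gaze shift immediately while the neck follows at a bounded rate, so the eyes drift back toward center as the head catches up. The sketch below illustrates that general idea only; the class, function, and every limit value are assumptions chosen for the example, not AheadForm's controller or the Origin M1's actual joint ranges.

```python
from dataclasses import dataclass


@dataclass
class GazeState:
    neck_yaw: float = 0.0  # degrees, neck relative to torso
    eye_yaw: float = 0.0   # degrees, eyes relative to head


def clamp(x, lo, hi):
    return max(lo, min(hi, x))


def step_gaze(state, target_yaw, dt,
              eye_limit=30.0, neck_limit=70.0, neck_rate=60.0):
    """Advance one control step toward target_yaw (degrees, torso frame).

    All limits are hypothetical: eye_limit and neck_limit are joint ranges
    in degrees, neck_rate is the maximum neck speed in degrees per second.
    """
    # The neck turns toward the target, but no faster than neck_rate.
    neck_error = clamp(target_yaw, -neck_limit, neck_limit) - state.neck_yaw
    max_step = neck_rate * dt
    neck_yaw = state.neck_yaw + clamp(neck_error, -max_step, max_step)
    # The eyes cover whatever the neck has not yet reached (idealized as
    # instantaneous), within their own mechanical range.
    eye_yaw = clamp(target_yaw - neck_yaw, -eye_limit, eye_limit)
    return GazeState(neck_yaw, eye_yaw)


state = GazeState()
for _ in range(5):
    state = step_gaze(state, target_yaw=20.0, dt=0.1)
    print(round(state.neck_yaw, 2), round(state.eye_yaw, 2))
```

In this toy model the combined gaze lands on a reachable target in the first step, and the split between eyes and neck shifts gradually toward the neck. A real controller would also model eye velocity limits and use IMU feedback to compensate for head motion.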

Interaction Scenarios Expand

Trials in HRI labs during late 2025 positioned the Origin M1 as a digital companion for therapy sessions, where its coordinated gaze eases patient anxiety through mirrored attentiveness. Educational setups use it to teach social cues, with students practicing interviews against its responsive expressions. Upper-body control supports precision instrument handling for AI research, while safety features such as collision detection allow people to work close to the robot. These applications show how a stationary robot can deliver human-centric outcomes, from rapport-building in healthcare to intuitive learning aids, without mobile hardware.

Skill Architecture Summary

Eye-embedded RGB cameras and microphones enable nuanced gaze tracking for interactions that hold a user's focus over long sessions; the listed battery figure of 3-5 years presumably refers to service life rather than runtime between charges. IMU-gyroscope stabilization and Visual SLAM drive fluid head-eye coordination and natural upper-body gestures when engaging with people. At 60x40x30 cm and 15 kg, the stationary frame prioritizes facial dexterity and supports precision payloads, with force limiting and emergency stops for safety. A proprietary OS with ROS2 and Python APIs supports HRI research, AI embodiment, and education.

Rivals Face-Off

| Robot | Key Advantage | Where Origin M1 Wins | Target Use |
|---|---|---|---|
| Elf-Xuan 2.0 | Full-body agility | Superior gaze sync for interactions | Dynamic performance demos |
| Elf V1 Series | Cost-effective elf designs | Deeper emotional facial realism | Entry-level companionship |
| Q5 | Broad mobility options | Precision head-eye coordination | Research embodiment studies |
| Embodied Tien Kung 2.0 Pro | Advanced full-limb dexterity | Compact, long-life interaction focus | HRI lab experiments |

